Silicon IP Cores

IoT Phase 2: Design Matters

by Bill Finch, SVP, CAST, Inc.

The next phase of the IoT is about to start. What most distinguishes it from the first? Clever design, using the right IP.

In the first phase, there was little clarity about what functionality really mattered. Engineers were tasked with getting products out ASAP. Because of the uncertainty and rush, early products were mostly built around off-the-shelf parts made by IDMs (Integrated Device Manufacturers). The emphasis was on getting things working under the new “IoT” umbrella, not on optimizing against more specific criteria.

This will not be true in the second phase.

In many markets, we will see a resurgence of custom SoCs being designed with a much clearer concept of what will differentiate these products from the competition. We always knew that power consumption in edge devices was a big deal, and now we know—with a great deal of certainty—what activities consume power. Optimizing to deal with these functions will now be paramount.

Wireless transmissions, no matter the frequency or standard, have to be optimized. There is, however, a limit to what can be done. Sooner or later you have to turn on the radio. Therefore, the best approach is to process the raw data at the source to reduce its size. This means far more compute power will be needed at the edge than most people originally believed.
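As a rough illustration of why processing at the source shrinks what the radio has to send, the sketch below compares transmitting every raw sensor reading with transmitting a small summary computed on the device. The sample data, the payload layout, and the function names are all hypothetical and chosen only for this example. Real designs would pick whatever reduction suits the application.

```python
import struct

def raw_payload(samples):
    """Pack every sample as a 4-byte little-endian float (send everything)."""
    return struct.pack(f"<{len(samples)}f", *samples)

def summary_payload(samples):
    """Pack only min, max, and mean as three 4-byte floats (send a summary)."""
    mean = sum(samples) / len(samples)
    return struct.pack("<3f", min(samples), max(samples), mean)

# Hypothetical data: 600 temperature readings collected between transmissions.
samples = [20.0 + 0.01 * (i % 50) for i in range(600)]

raw = raw_payload(samples)
summary = summary_payload(samples)
print(f"raw: {len(raw)} bytes, summary: {len(summary)} bytes")
```

The trade-off is the one described above: the device spends compute cycles on the reduction so that the radio stays on for a much shorter time.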

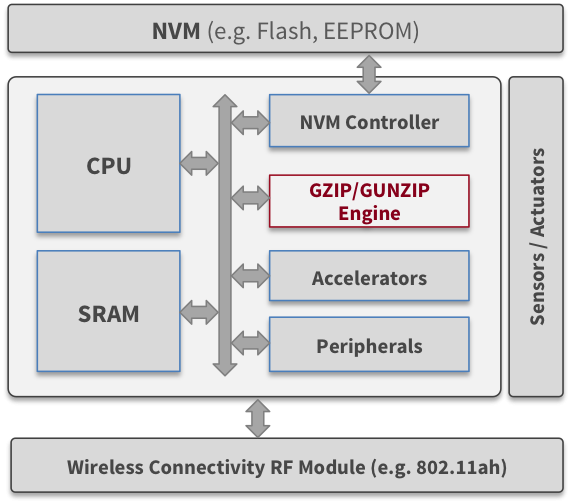

More computing at the edge also means more software at the edge. More software means bigger memories, which then consume more power. So controlling the size of the on-board memories requires new thinking as well.

One approach to meeting the challenge of optimizing power across the chip is to break the design down into sub-systems, each highly optimized for a particular function. Making this strategy work will require tiny processors that can each perform a single task very efficiently and then go to sleep as soon as their job is done. Force-fitting a processor built on the typical 32-bit architecture of pipelines and caches will not be good enough. (An excellent example of this new mindset is the BA20 “PipelineZero” 32-bit Processor.)

Another low-power approach is to optimize the video that many IoT applications capture, send, or display. Excellent codecs and hardware IP for video compression have been popular for years, but the IoT needs something different: not broadcast quality but good-enough quality, achieved with far less power and far fewer silicon resources. Newer IP portfolios, such as the latest generation of Video and Image IP Cores available from CAST, support this approach.

Another way to reduce power and shrink the size of the main on-chip memories is to take a cue from the Data Center experts: use a data compression strategy. This is a well-known and proven technology.

Compressing the firmware when it is written and decompressing it when it is read can substantially reduce memory requirements and save a significant amount of power. Unlike in the Data Center, where processing power is abundant, this technique must be implemented with hardware accelerators when it is applied at the chip level. For a detailed example of how this works, see the conference paper Innovative Energy Savings Using GZIP IP Within IoT Devices.
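The compress-on-write, decompress-on-read round trip can be sketched in software to show the idea. This is only an illustration: it uses Python's `zlib` module (DEFLATE, the algorithm underlying GZIP) in place of the hardware accelerator the article calls for, and the firmware image and function names are made up for the example.

```python
import zlib

def store_image(image: bytes, level: int = 9) -> bytes:
    """Compress a firmware image before writing it to memory."""
    return zlib.compress(image, level)

def load_image(stored: bytes) -> bytes:
    """Decompress a stored firmware image when it is read back."""
    return zlib.decompress(stored)

# Hypothetical firmware image: padding, a lookup table, and a string, repeated.
firmware = (b"\x00" * 64 + bytes(range(256)) + b"boot: init peripherals\n") * 32

stored = store_image(firmware)
assert load_image(stored) == firmware  # the round trip is lossless

print(f"original: {len(firmware)} bytes, stored: {len(stored)} bytes")
```

The savings on a real image depend entirely on how compressible that image is. The highly repetitive data here is a best case, not a benchmark.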

Beyond technical issues, success in the second wave of the IoT requires that costs come down significantly. Well-thought-out designs that can achieve the kinds of economies of scale typically seen in consumer markets will be necessary for corporate success and profitability. Additionally, designing products that have a bill of materials with substantial built-in royalty streams will hold back success and lead to what in earlier generations was known as “profitless prosperity”: wildly successful products with margins so thin that no one made money.

Still, it remains to be seen whether the consolidation wave that has swept through the industry is going to lead to successful second-wave designs.

Most of the M&A of the past few years was focused on building products for smartphones, tablets, and other leading-edge consumer devices. These designs were giant multi-processor SoCs that required the latest bleeding-edge process nodes to work. They required specialized design skills and large design teams. Today, however, such a hugely expensive infrastructure is not at all necessary to build many of the optimized IoT designs that define the second wave.

That is why it is highly likely we will see a return to the days when a few smart people with the right ideas can actually bring to market successful designs built on much cheaper processes. This might be the classic “two guys in a garage” using FPGAs, or small start-up teams taking advantage of the latest tools and methods to produce more complex ASICs or SoCs. Being free of corporate structures that dictate choices in IP and tools will allow a more creative design approach and more cost-effective (read “profitable”) IoT solutions.

Certainly one could ask: will funding be available? The answer depends on whether the angels, the VCs, and their brethren can return to the days when they willingly took reasonable risks to fund people with a vision. The IoT will not be built on apps.

We’re not there yet, but the second wave of the IoT is coming. The time to prepare is now.